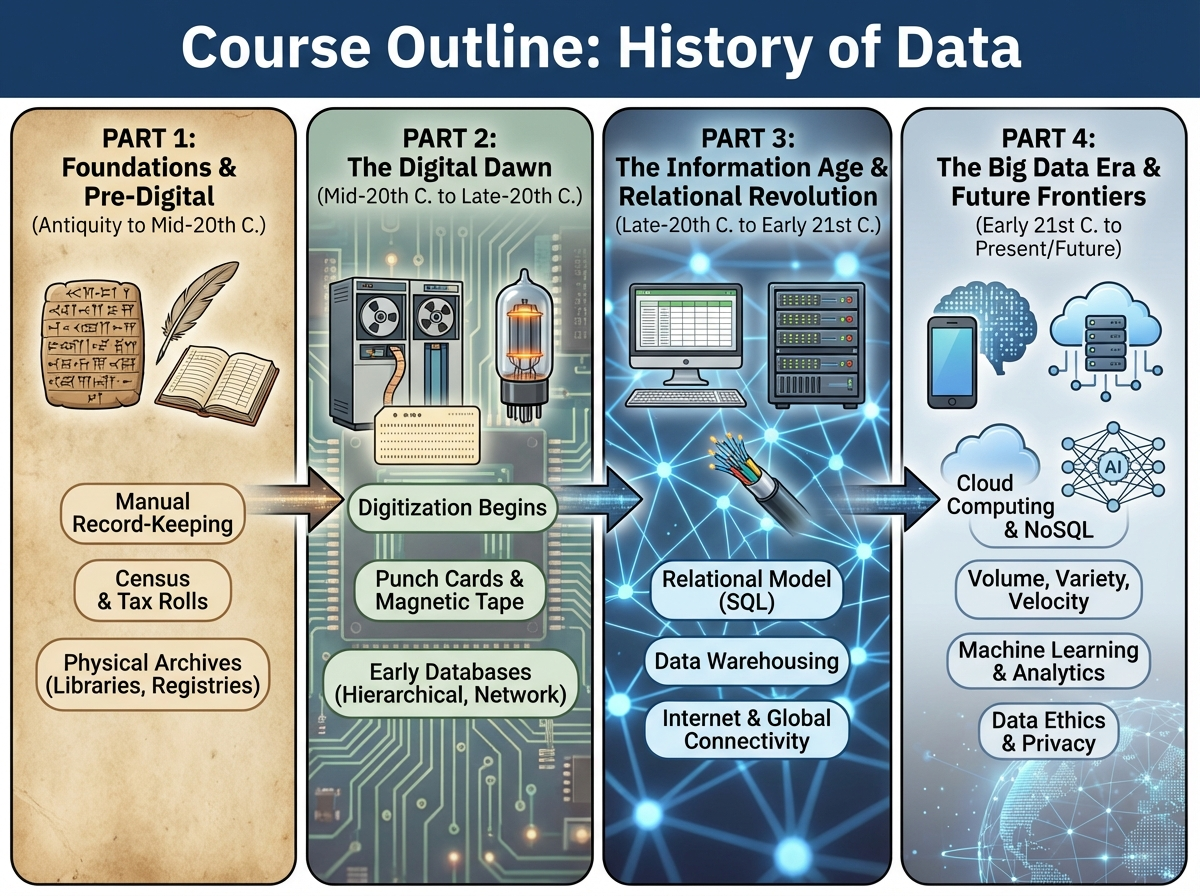

The History of Data

FREE Intro | ~2 hours

From punched cards to lakehouses and AI-ready data platforms: understand how data technology evolved and why the future is starting now.

What you'll gain (free entry point):

- ✅ Key milestones in data technology (1940–2025)

- ✅ Why relational databases, Hadoop, cloud, and lakehouses emerged

- ✅ The shift from batch → real-time → AI-driven data

- ✅ Lessons from the past you can apply today

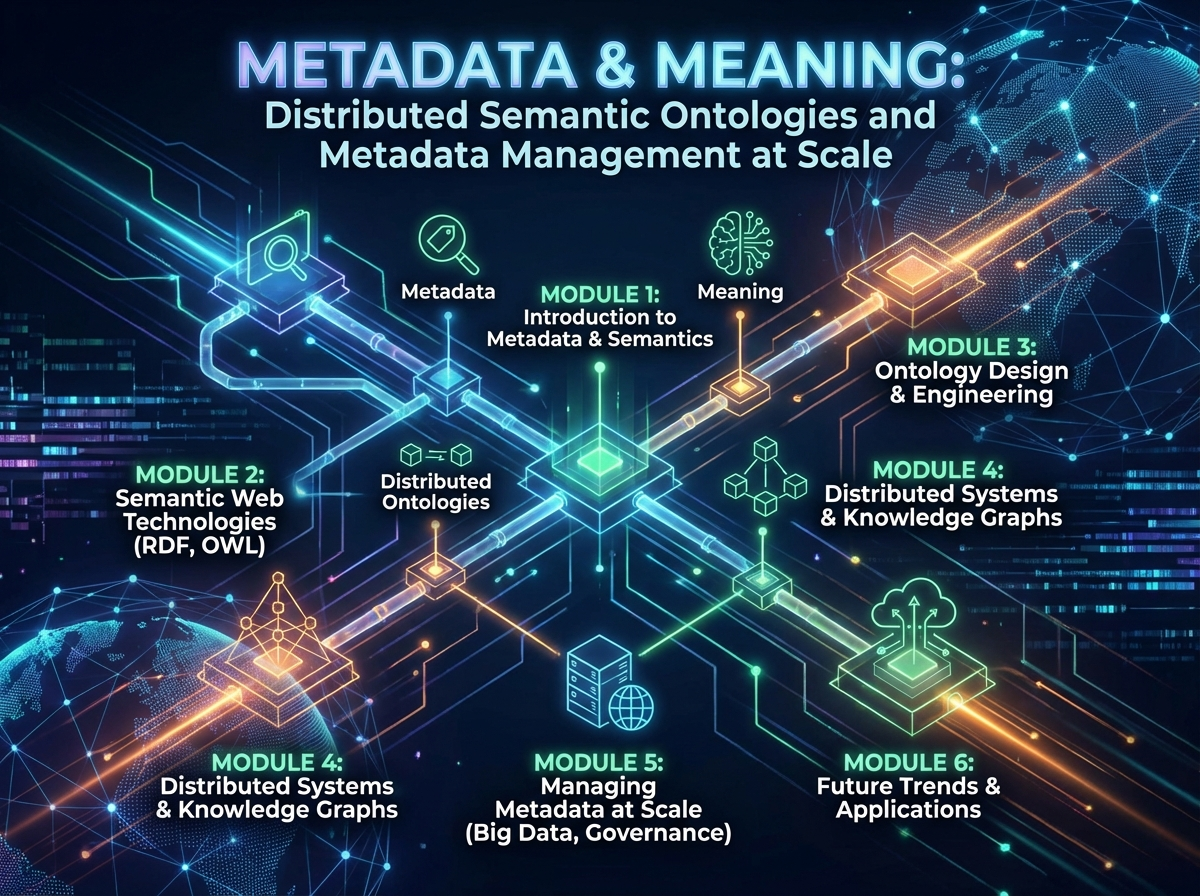

- ✅ Context for modern architectures (Lakehouse, Semantic, etc.)

Ideal as a first step for anyone serious about data engineering or architecture.

Outline (~2 hours total):

- The early days: punched cards & mainframes

- The relational revolution & SQL

- Big Data & the Hadoop era

- Cloud, data lakes & warehouses

- The rise of the lakehouse, AI & semantic data

- Summary & what it means for you

Start completely free – no credit card, no catch. Discover the history behind your future work.